Big Data Duplication - don't do it



Vinay Samuel, Jun 13, 2019 9:33:00 PM


A Virtual Data Warehouse (VDW) joins data across many data stores, networks, and clouds to create the views that BI tools need. This is a step change in the data platform and integration world: the old approach of building a data warehouse or data lake requires data to be moved, restructured, or transformed and ordered before it can create any value.

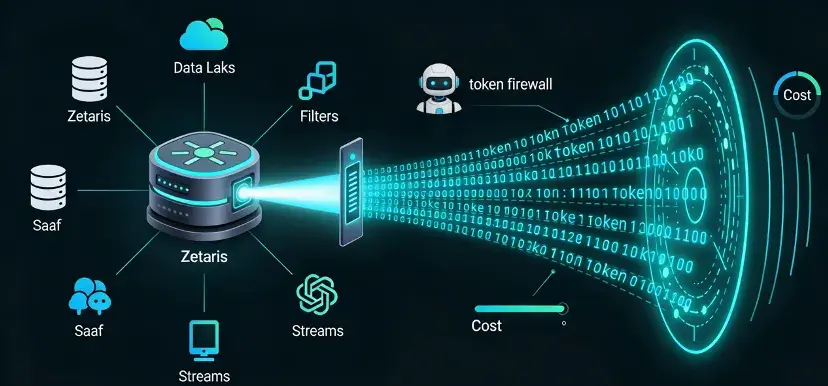

In the traditional data lake or data warehouse approach (the centralized model), mistakes made during the costly physical data integration project create data quality issues. Zetaris: The Networked Data Platform solves these cost inefficiencies and technical barriers to analytics at scale. Rather than moving or duplicating data for analysis, it constructs a Virtual Data Warehouse.
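The idea of querying data in place can be illustrated with a minimal Python sketch. It is not the Zetaris API: it uses the standard library's `sqlite3` module, with two separate in-memory SQLite databases standing in for independent data stores, and the table and view names (`orders`, `customers`, `customer_revenue`) are hypothetical. The view stores only the query definition, so no rows are copied into a new store.

```python
import sqlite3


def build_virtual_view() -> sqlite3.Connection:
    """Join two separate stores through a view, without copying any rows."""
    # First store (assumption: stands in for a sales database).
    conn = sqlite3.connect(":memory:")
    # Second, independent store (assumption: stands in for a CRM database).
    conn.execute("ATTACH DATABASE ':memory:' AS crm")

    conn.execute("CREATE TABLE main.orders (customer_id INTEGER, amount REAL)")
    conn.execute("CREATE TABLE crm.customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO main.orders VALUES (?, ?)",
        [(1, 120.0), (1, 30.0), (2, 75.5)],
    )
    conn.executemany(
        "INSERT INTO crm.customers VALUES (?, ?)",
        [(1, "Acme"), (2, "Globex")],
    )

    # A TEMP view may reference tables in any attached database. It holds
    # only the query text; the join runs against the source tables on read.
    conn.execute(
        """
        CREATE TEMP VIEW customer_revenue AS
        SELECT c.name AS name, SUM(o.amount) AS revenue
        FROM crm.customers AS c
        JOIN main.orders AS o ON o.customer_id = c.id
        GROUP BY c.id, c.name
        """
    )
    return conn


def query_revenue(conn: sqlite3.Connection) -> list:
    """What a BI tool would see: one view, regardless of where the data lives."""
    return conn.execute(
        "SELECT name, revenue FROM customer_revenue ORDER BY name"
    ).fetchall()
```

A BI tool pointed at `customer_revenue` sees a single table, while the underlying rows stay in their original stores.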

With Zetaris: The Networked Data Platform, BI tools can analyse a far broader range of data: instead of a limited set held within the tool or data warehouse, they can (potentially) reach all the relevant and available data across the Internet. Data in the stream can be instantly aggregated into dynamic views and combined with historical data held in many different databases, files, and other sources, forming a Data Mesh that supports a high-value view for your tools. And by leveraging the platform's mixed-streaming analytics capability (the ability to analyse data in the stream together with data in the warehouse or lake in real time), the latency of the data drops from intra-day (at best) to near real-time.
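As a rough illustration of combining data in the stream with historical data, the sketch below merges incoming events with stored totals and emits an updated view after every event. It is a simplified stand-in, not the platform's implementation: the function name `live_totals`, the `(key, amount)` event shape, and the in-memory dictionary used as the "warehouse" are all assumptions made for the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, Tuple


def live_totals(
    historical: Dict[str, float],
    events: Iterable[Tuple[str, float]],
) -> Iterator[Dict[str, float]]:
    """Yield a combined historical + streaming view after each event."""
    running: Dict[str, float] = defaultdict(float)  # aggregates seen in the stream
    for key, amount in events:
        running[key] += amount
        # The historical store is read, never modified or copied into the stream.
        keys = sorted(set(historical) | set(running))
        yield {k: historical.get(k, 0.0) + running.get(k, 0.0) for k in keys}
```

Each yielded dictionary reflects the latest event as soon as it arrives, which is the sense in which latency moves from batch refreshes toward near real-time.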

The video below shows how a Zetaris Data Mesh is connected with Tableau. In this video, a pre-built Virtual Data Warehouse deployed with the Zetaris Data Mesh technology is used by Tableau as its data source.
